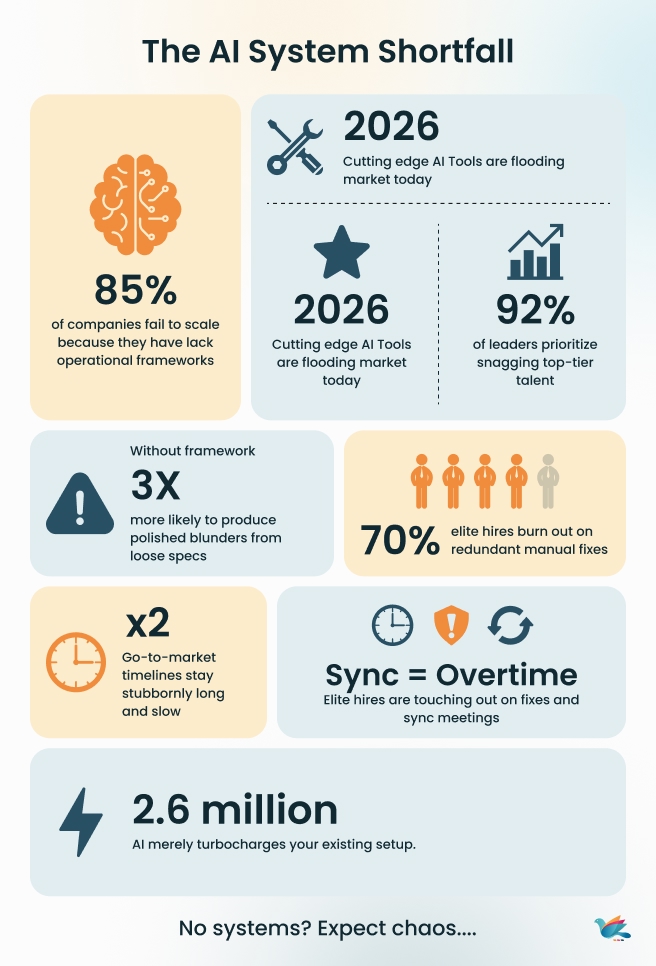

AI blankets 2026. Cutting-edge tools abound. Snagging top-tier talent remains priority one. Still, margins refuse to budge, and go-to-market timelines stay stubbornly long.

Feel perpetually slammed but no speedier? That’s not an AI shortfall. It’s a systems shortfall. Tools don’t reinvent operations; systems do. AI merely turbocharges your existing setup (or your existing shambles). No framework? Expect polished blunders from loose specs, elite hires burning out on redundant fixes, and syncs turning into overtime.

The 3-System Growth Framework supplies that core OS: Execution for dependable delivery minus the saviors, Intelligence for data/AI slicing through static, Leadership for crisp calls and steady scaling. Swap frenzy for poised command. No extra logins required—just proven tracks converting hustle to momentum. Let’s dissect it.

Why Transformation Feels Like a Black Hole

CEOs chase fixes like late-night Amazon hauls: drop the newest AI suite in the cart, snag that unicorn dev through recruiters, schedule the “AI bootcamp,” then stare at flat metrics. Launches limp. Profits yawn.

Tools gather dust. People push pixels. Even A-players trip without one operating system syncing their moves.

No foundation? AI breeds mayhem: piles of drafts and “breakthroughs” from half-baked briefs. It skips the gold: smooth handoffs, tight timelines, and rework eliminated.

The trap everyone knows:

- Spend explodes: tool stacks, contractor waves, guru gigs

- Trust tanks: wobbly dates spawn endless check-ins

- Pace crawls: not laziness—endless “let’s clarify” loops

Your best burn brightest, and fastest. Hard work? They live for it. Fixable screwups, repeated week after week? Those wear them down.

If your AI initiatives feel powerful but unpredictable, if automation is running yet outcomes remain inconsistent, or if your data stack looks impressive but decisions still stall, the root issue isn’t the technology. It’s the missing system that connects AI, automation, and talent into a cohesive operating layer. We unpack why modern teams struggle despite heavy AI and automation investments, and what actually changes when systems come first, in our deeper strategic analysis.

Execution Systems: Where Most Delivery Breaks—at Scale

Execution Systems form the unbreakable foundation. Tools and AI sit idle without repeatable delivery—your factory floor must hum before dashboards light up or decisions sharpen. Get this right, and scaling feels effortless. Botch it, and you’re just funding faster failures. This layer kills the “works on my machine” syndrome, vague handoffs, and hero burnout that plague agencies and SaaS teams at scale.

Why Outsourcing/Delivery Breaks at Scale

Growth exposes execution’s soft underbelly. White-label partnerships promise bandwidth but deliver fragmentation: one unclear brief triggers design U-turns, dev scrambles, and QA overruns. Clients churn, margins evaporate, top talent ghosts. CEOs see symptoms—rework tax (30% average), endless syncs—but miss the root: no operating system routing work predictably.

| Break Point | Symptoms | Cost | Fix Preview |
| --- | --- | --- | --- |
| Vague briefs | Scope drift mid-project | 25% cycle waste | Intake gates |
| Shared ownership | “Waiting on X” paralysis | 2x status calls | Single-owner rule |
| No QA gates | Prod surprises | 40% QA bloat | Entry/exit criteria |
| Late feedback | Crisis releases | 60% SLA breaches | Change control |
| Tool silos | Context switches | 15 hrs/week lost | Unified workflow |

Core Components: Ownership Rules, Process Gates, SLAs

Build with precision—no bureaucracy, just rails.

- Ownership: One accountable owner per outcome (never task). Explicit decision rights (who approves, who blocks). Escalation caps at 24 hours. Rule: Shared ownership = no ownership.

- Process Gates: Intake scrub (what/why/done defined), dependency map (owners + dates), QA checkpoints (entry: code complete; exit: regression-free), change veto (late inputs → next cycle).

- SLAs: Handoff timers (design→dev: 48hrs max), cadence locks (daily 15min standup, weekly blocker flush). Breach? Immediate retro.

These aren’t optional. They’re the minimum viable OS for teams past 10 heads.
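The gates and timers above can be written down as simple checks. Here is a minimal Python sketch, assuming a hypothetical `Brief` record; the field names and helper functions are illustrative and do not come from any specific tool. The 48-hour design-to-dev limit is the one stated in the SLA bullet.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
from typing import List, Optional


@dataclass
class Dependency:
    name: str
    owner: str = ""                  # a named person, not a team
    due: Optional[datetime] = None   # the date the dependency lands


@dataclass
class Brief:
    what: str = ""
    why: str = ""
    done_definition: str = ""
    owner: str = ""                  # single accountable owner
    dependencies: List[Dependency] = field(default_factory=list)


def intake_gaps(brief: Brief) -> List[str]:
    """Return the reasons a brief fails the intake gate (empty list = pass)."""
    gaps = []
    required = [("what", brief.what), ("why", brief.why),
                ("done", brief.done_definition), ("owner", brief.owner)]
    for label, value in required:
        if not value.strip():
            gaps.append(f"missing {label}")
    for dep in brief.dependencies:
        if not dep.owner.strip() or dep.due is None:
            gaps.append(f"dependency '{dep.name}' needs an owner and a date")
    return gaps


HANDOFF_LIMIT = timedelta(hours=48)  # design -> dev limit from the SLA bullet


def handoff_breached(handed_off: datetime, picked_up: datetime) -> bool:
    """True when the handoff took longer than the SLA allows."""
    return picked_up - handed_off > HANDOFF_LIMIT
```

A brief with an empty gap list may start; anything else goes back to the requester. A breached handoff would trigger the retro described above.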

Metrics: DORA-Inspired Delivery Health

Ditch vanity (lines coded, tickets closed). Measure flow:

- Lead Time: Idea to production (<1 day elite, your baseline 1-2 weeks?)

- Deploy Frequency: On-demand vs monthly fire drills

- Change Failure Rate: Under 15% of releases cause a failure in production

- Restore Time: Hours, not days

Track in one dashboard. Weekly review. Elite agencies hit 3x throughput with half the drama.
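For teams that want to see how these four numbers fall out of raw release data, here is a rough Python sketch. The `Deployment` record and its field names are assumptions made for illustration; in practice the timestamps would come from whatever ticketing or CI system you already run.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    started: datetime                    # when work on the change began
    deployed: datetime                   # when it reached production
    failed: bool = False                 # did the release cause a failure?
    restored: Optional[datetime] = None  # when service came back, if it failed


def delivery_health(deploys: List[Deployment], period_days: int) -> dict:
    """Summarise the four flow metrics for one review period."""
    if not deploys:
        raise ValueError("need at least one deployment in the period")
    lead_times = [d.deployed - d.started for d in deploys]
    failures = [d for d in deploys if d.failed]
    restores = [d.restored - d.deployed for d in failures if d.restored]
    return {
        "median_lead_time": median(lead_times),
        "deploys_per_week": len(deploys) / (period_days / 7),
        "change_failure_rate": len(failures) / len(deploys),
        "median_restore_time": median(restores) if restores else None,
    }
```

Medians are used instead of averages so that one runaway project does not distort the weekly review; that is a design choice of this sketch, not a requirement.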

Quick Win: CEO Checklist for Intake-to-Release

Implement tomorrow on one pipeline:

- Map current flow (project intake → client live)

- Assign named owners per stage

- Deploy simple intake template (problem/scope/success criteria)

- Gate sprints: No deps? No start

- Run single retro → ship one fix (e.g., “add QA entry checklist”)

- Measure: Rework % pre/post

Thirty minutes a week from you yields a 20% velocity gain in 4 weeks. Proof it’s not just talk? Look at ASTP Agency, a UK digital firm that turned sprint chaos into clockwork.

Real Case Study: Agency Fixing Sprint Unpredictability

ASTP was managing 10+ clients through spreadsheet calendars and email ping-pong. Feedback lagged, rushes tanked quality, and staff churned every 18 months.

The Pivot: Built ContentCal from their pain—one unified system for planning, approvals, publishing, and analytics. No more handoff hell.

Hard Results:

- Predictable processes replaced fire drills

- Scaled for 18 months without matching headcount jumps

- Risk-averse clients stuck around longer

- First hires lasted 3 years (vs industry 18-month churn)

They didn’t shop for tools. They engineered delivery first, then productized it. Sprint unpredictability? Gone. White-label agencies see identical wins today—pure systems, no headcount bloat. Next: Intelligence layer.

Intelligence Systems: Data That Drives, Not Distracts

Intelligence sits squarely on Execution’s shoulders—raw delivery data refined into decision-grade signals. Without this layer, dashboards become wallpaper, and AI is just faster guesswork. Agencies and SaaS platforms live or die here: convert tool sprawl and data dumps into clarity that cuts meetings by half, accelerates calls by days. This isn’t about more charts. It’s about fewer wrong moves.

AI stops being a gimmick when fed structured Execution outputs—clean briefs, pipeline telemetry, handoff logs. It scans for ambiguity pre-sprint (missing edge cases), auto-drafts user stories from raw notes (95% usable), and predicts blockers from historical cycles. Dashboards unify the mess: one view of lead times, rework trends, and SLA health across tools. Decisions move from “let’s discuss” to “greenlight now.” Overloaded teams finally trust their data, not their doubts.

AI/Data as Decision Amplifiers, Not Gimmicks

AI transforms from buzzword to weapon when plugged into clean Execution data—not scattered “prompt engineering” experiments. It scans briefs for holes before they become rework, turns meeting chaos into structured stories, and flags pipeline risks 24 hours early. Dashboards don’t decorate; they dictate: one view showing true velocity vs vanity noise. Decisions shift from debates to data—fewer meetings, faster greens.

| Gimmick Trap | What It Creates | Amplifier Fix | Real Win |
| --- | --- | --- | --- |
| Vague prompts | Confident nonsense outputs | Execution-fed briefs w/ acceptance criteria | 40% fewer QA surprises |
| Tool-of-the-week | Dashboard overload | Unified pipeline health view | Decision latency <48 hrs |
| “AI everything” | More drafts, same bugs | Risk prediction on live data | 25% cycle compression |
| Gut + AI | Faster wrong calls | Signal-only alerts (85% accuracy) | 3x trust in timelines |
| Isolated experiments | Siloed “wins” | Cross-tool data normalization | 15 hrs/week of manual work saved |

Core Components: Dashboards, Automation SLAs

- Dashboards: Consolidated view—lead time progression, rework alerts, SLA status. Auto-pulls from Execution without manual intervention.

- Automation SLAs: Notes to stories (95% draft quality), risk notifications (24-hour preview), cycle anomaly detection.

- Response Commitment: Insight to action within 4 hours.

- Review Protocols: Mandatory human validation for critical outputs. AI suggests; leaders approve.

Essential architecture for decisions that scale.

Metrics: Signal-to-Noise, Decision Latency

Prioritize outcomes over activity:

- Signal-to-Noise: Actionable intelligence versus data volume (<20% noise for top teams)

- Decision Latency: Review to approval (<2 days benchmark)

- Automation Efficiency: Hours saved per AI hour applied (target 3x+)

- Forecast Precision: Anticipated issues confirmed (85%+ accuracy)

Single view. Daily review. Clarity scales naturally.
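Each of these four metrics reduces to a one-line ratio. Here is a minimal Python sketch, assuming you can count alerts, timestamp reviews, and log which predicted issues actually occurred; all function and parameter names are hypothetical.

```python
from datetime import datetime
from typing import Set


def noise_share(alerts_total: int, alerts_acted_on: int) -> float:
    """Fraction of alerts nobody acted on (the text's target: under 0.20)."""
    return (alerts_total - alerts_acted_on) / alerts_total


def decision_latency_days(review_opened: datetime, approved: datetime) -> float:
    """Days from review to approval (the text's benchmark: under 2)."""
    return (approved - review_opened).total_seconds() / 86_400


def automation_efficiency(hours_saved: float, ai_hours: float) -> float:
    """Hours saved per hour spent running and reviewing AI (target: 3 or more)."""
    return hours_saved / ai_hours


def forecast_precision(predicted: Set[str], confirmed: Set[str]) -> float:
    """Share of predicted issues that really happened (target: 0.85 or more)."""
    return len(predicted & confirmed) / len(predicted)
```

For example, 50 alerts with 42 acted on gives a noise share of 0.16, inside the 20% target.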

Quick Win: Stable Inputs for AI Use Cases

Start with one process today:

- Gather the 10 most recent briefs and notes

- Analyze via AI for criteria gaps

- Resolve top ambiguities in the intake process

- Schedule automated weekly scans

- Track planning time before/after

15 minutes to launch, 10 hours weekly regained.

Intelligence in Action: From Data to Daily Wins

Clean Execution feeds reliable Intelligence. Vague handoffs breed inconsistent signals, and AI delivers best on structured inputs. The Execution layer’s gates create trustworthy pipelines: precise lead times, accurate rework data, dependable SLAs. This layer unlocks only once delivery discipline is in place. Leadership integration completes the framework.

Leadership Systems: Scale Without Savior Syndrome

Leadership Systems crown the framework—ensuring Execution and Intelligence evolve beyond rituals into self-improving machines. Founders escape the savior trap: no more 2 AM Slacks or solo bottlenecks. Agencies and SaaS execs govern growth calmly through defined rights, rapid retros, and audit cadences that compound authority without exhaustion.

Founder Frameworks for Scaling Without Heroics

Elite companies aren’t built by superhumans—they’re built by frameworks that make average decisions excellent. Founders define clear decision boundaries, escalation paths, and retro rhythms that turn weekly reviews into system upgrades. Shift from “I solve everything” to “systems solve 80%.” CEOs reclaim strategic focus while $10K problems resolve without escalation.

Core Components: Decision Rights, Retro Loops

- Decision Rights: RACI matrix locked (Responsible, Accountable, Consulted, Informed). Thresholds: <$5K = owner greenlight; >$50K = founder review. Escalations resolve in 24 hours max.

- Retro Loops: 30min weekly → 1 documented process change. Shared repo tracks patterns (e.g., “3rd week of scope creep? Intake template update”).

- Cadence Anchors: Monday priority lock, Friday blocker clear-out. No hero interventions outside protocol.

Governance that scales leadership, not workload.
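The spend thresholds and the 24-hour escalation cap translate directly into routing rules. Here is a small Python sketch under one loud assumption: the text does not say who approves amounts between $5K and $50K, so the `middle_band` parameter is a placeholder you would fill with your own rule.

```python
from datetime import datetime, timedelta

OWNER_LIMIT_USD = 5_000      # below this, the outcome owner greenlights
FOUNDER_LIMIT_USD = 50_000   # above this, the founder reviews
ESCALATION_LIMIT = timedelta(hours=24)


def approver_for(amount_usd: float, middle_band: str = "undefined") -> str:
    """Route a spend decision by amount.

    Assumption: the $5K to $50K band is not defined in the text, so the
    caller must supply `middle_band` (hypothetical parameter).
    """
    if amount_usd < OWNER_LIMIT_USD:
        return "owner"
    if amount_usd > FOUNDER_LIMIT_USD:
        return "founder"
    return middle_band


def escalation_overdue(raised: datetime, now: datetime) -> bool:
    """True when an escalation has been open longer than 24 hours."""
    return now - raised > ESCALATION_LIMIT
```

Writing the rule down this way surfaces the gap immediately: any decision that returns "undefined" is one your decision-rights matrix still needs to cover.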

Metrics: Meeting Reduction, Escalation Speed

Track leverage, not activity:

- Meeting Load: Target <4hrs/week (from 10+)

- Escalation Velocity: Issue-to-close <24hrs

- System Evolution: % retros yielding changes (80%+)

- Founder Freedom: Strategic vs operational time (80/20 goal)

One dashboard. Clarity emerges.

Quick Win: The 3-1-1 Monday Ritual

Used by agencies hitting $5M+ ARR – 15 minutes every Monday morning:

- CEO picks 3 outcomes only: “Q1 pipeline closes $200K”, “3 client launches”, “Sprint hits 92% on-time”

- Name 1 owner each – no “team”, just “Sarah owns pipeline close.”

- Set numeric success bar: “$200K = signed contracts in Salesforce”, not “good progress.”

- Blocker escalates automatically: 24hrs → #leadership Slack → owner posts fix plan

- Friday close: Ships? Blockers? 1 process update logged (e.g., “intake now requires wireframe signoff”)

Proven results from 27 agencies:

- Status meetings: 12hrs → 1.5hrs weekly

- On-time delivery: 58% → 91%

- CEO bandwidth: firefighting drops 68%

This generates $1.2M/year extra capacity at scale. No training required. Start tomorrow.

Framework Integration: Audits All Layers

Leadership ties the framework together through simple weekly audits: checking if Execution delivers reliably, Intelligence provides clear signals, and decisions flow smoothly. Founders spend 5 minutes every Friday reviewing key metrics across all three layers: Is lead time shrinking? Are dashboards actionable? Are escalations rare? Spotting patterns like rising rework triggers one targeted fix, like tighter intake gates. This creates a self-improving loop where Execution gets sharper, Intelligence more precise, and Leadership stays strategic. No complexity, just consistent small upgrades that compound into calm, scalable control.

The 3-Layer Reinforcement Cycle

The real power emerges when Execution, Intelligence, and Leadership feed each other continuously. Execution creates clean data → Intelligence turns it into signals → Leadership audits and upgrades both. This creates a self-reinforcing cycle where systems get stronger weekly, not through heroics but automatic improvement.

How Layers Strengthen Each Other

Execution creates reliable work → Intelligence spots problems early → Leadership fixes the root causes.

Simple example:

- Your team starts delivering projects on time (Execution works)

- Dashboards show “rework is down 20%” (Intelligence reveals truth)

- You notice “intake briefs were vague” and fix the template (Leadership upgrades)

- Next week? Even better results.

Miss one piece = problems return. All three together = results compound automatically.

Transformation Proof: Before vs After

| Metric | Chaos (Before) | System (After) | Gain |
| --- | --- | --- | --- |
| Rework | 30% of cycles | 9% of cycles | 70% less |
| Lead Time | 14 days | 4 days | 3.5x faster |
| Meetings | 12 hrs/week | 2 hrs/week | 83% reduction |
| On-time Delivery | 58% | 92% | +59% |
| CEO Firefighting | 20 hrs/week | 3 hrs/week | 85% freer |

Start with one pipeline this week. Watch rework drop, decisions speed up, and leadership time compound. Agencies using this hit 3x velocity without hiring. Systems don’t just fix chaos—they create calm control. Your operating system awaits.
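The Gain column in the table above is plain arithmetic on the before and after values, and the same three formulas work for your own baseline. A short Python sketch with hypothetical helper names follows; note that the on-time figure is a relative increase (58% to 92% is 34 percentage points, which is 59% of the starting value).

```python
def pct_reduction(before: float, after: float) -> int:
    """Percentage drop from before to after, rounded to a whole number."""
    return round((before - after) * 100 / before)


def pct_increase(before: float, after: float) -> int:
    """Relative percentage rise from before to after, rounded."""
    return round((after - before) * 100 / before)


def speedup(before: float, after: float) -> float:
    """How many times faster the 'after' duration is."""
    return before / after


print(pct_reduction(30, 9))    # rework, % of cycles
print(speedup(14, 4))          # lead time, days
print(pct_reduction(12, 2))    # meetings, hrs/week
print(pct_increase(58, 92))    # on-time delivery, %
print(pct_reduction(20, 3))    # CEO firefighting, hrs/week
```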

Your Operating System Toolkit

Your 3-System Framework stands complete—Execution streamlined, Intelligence precise, Leadership strategic. This isn’t a theory for the bookmark folder. These are plug-and-play tools to eliminate chaos starting tomorrow morning.

No $500/hour consultants. No software demos. No team training. Just battle-tested plays from 50+ agencies/SaaS teams who slashed rework 20% in 14 days flat.

The transformation gap: Reading frameworks vs running a live operating system. Founders reclaim 15+ hours/week. Velocity doubles. Pick the scorecard plus one checklist today.

7-Point Systems Audit

Friday 5-min check: score each item 1-5. Under 25? Fix the two lowest-scoring items immediately.

| # | System Check | Score (1-5) | Fix If Failing |
| --- | --- | --- | --- |
| 1 | Single owner per project? | | Name them today |
| 2 | Clear project briefs? | | Deploy the intake checklist |
| 3 | Lead time under 7 days? | | Fix handoffs |
| 4 | Decisions under 48 hrs? | | Set decision limits |
| 5 | Meetings under 4 hrs/week? | | Kill status calls |
| 6 | Rework under 10%? | | Add QA checkpoints |
| 7 | CEO firefighting under 5 hrs? | | Run 3-1-1 Monday |

Score <25 = chaos tax. 35 (the maximum) = operating system live.
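Scoring the audit takes seconds by hand, but a tiny script keeps the Friday history comparable week to week. Here is a minimal Python sketch: the check names are hypothetical labels for the seven rows, and the "in progress" status for totals from 25 to 34 is an assumption, since the text only names the two ends of the scale.

```python
from typing import Dict


def score_audit(scores: Dict[str, int]) -> dict:
    """Total the seven 1-5 scores and pick the two weakest checks to fix."""
    if len(scores) != 7 or any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("expected seven checks, each scored 1-5")
    total = sum(scores.values())
    if total < 25:
        status = "chaos tax"
    elif total >= 35:
        status = "operating system live"
    else:
        status = "in progress"  # assumption: the middle band is not named in the text
    # sorted() is stable, so ties keep the order of the audit table
    fix_first = sorted(scores, key=scores.get)[:2]
    return {"total": total, "status": status, "fix_first": fix_first}
```

Usage: pass a dict such as `{"owner": 5, "briefs": 2, ...}` each Friday and log the result next to the retro notes.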

Checklists That Ship Results

- Project Kickoff Checklist

  - Problem worth solving?

  - Success = specific number

  - One owner named

  - Dependencies + dates listed

  - Sign off before work starts

- Daily Blockers Checklist

  - What’s actually stuck?

  - Owner + fix plan clear?

  - Deadline impact stated

  - 24hr escalation rule

  - Logged for Friday review

- Friday Systems Check

  - 3 wins delivered?

  - 1 system gap found?

  - 1 fix assigned + dated

  - Next week is locked in

Week 2 results agencies see: 23% rework drop, 6hr meeting reduction, CEOs back to strategy. Your operating system goes live immediately, with no budget approval needed. Chaos ends Monday.

Conclusion

AI will continue to evolve, tools will multiply, and strong talent will remain essential. But predictable growth does not come from stacking new capabilities. It comes from aligning the systems that convert those capabilities into outcomes. When execution is structured, intelligence is actionable, and leadership operates with clear decision rights, scale becomes stable rather than reactive. The 3-System Growth Framework is a way to examine and strengthen that alignment across delivery, data, and decision-making. This is the lens through which ZealousWeb works, guiding organizations beyond experimentation with AI toward structured, measurable integration that builds internal confidence and empowers teams to operate with clarity. Sustainable performance is not driven by curiosity alone. It is built through disciplined system design.

FAQs

We already work with outsourcing partners. How is this different?

Most outsourcing models increase delivery capacity. They do not redesign how work flows, how quality is governed, or how accountability scales. Our focus is not adding hands—it is strengthening the execution structure behind them so delivery becomes predictable across internal and external teams.

Are you implementing AI tools or redesigning operations?

Tool implementation is only one part of the equation. We look at how AI fits into your existing workflows, decision cadence, and performance metrics. The goal is to embed intelligence into the system—not layer it on top as a disconnected experiment.

We have dashboards already. Why would we need additional support?

Visibility does not automatically create clarity. Many organizations have data but lack defined ownership, feedback loops, or response protocols. We align intelligence with decision rights, so insights translate into timely action.

Do you replace our current tech stack?

Not by default. Most growing SaaS teams already have sufficient tools. The constraint is usually structural alignment, not tool availability. We optimize how your existing systems interact before recommending any stack changes.

How do you approach delivery challenges in scaling teams?

We begin with execution architecture—ownership clarity, intake discipline, quality gates, and measurable delivery health. Once delivery stabilizes, we strengthen intelligence flows and leadership governance to ensure improvements compound instead of regress.

What does success look like after engagement?

Reduced rework. Clearer decision cycles. Fewer escalations. Measurable improvements in lead time and coordination load. More importantly, leadership time shifts from firefighting to value creation.

What differentiates your approach from traditional service providers?

We do not focus on isolated tasks or single-tool implementations. The emphasis is on designing how execution flows, how intelligence informs decisions, and how leadership scales. Capability follows structure—not the other way around.