Somewhere right now, an organization is onboarding its third white-label development partner in eighteen months.

The brief looks cleaner this time. The intro call went well. The samples are solid. And there’s a quiet, collective agreement among the team not to jinx it by saying what everyone is thinking — this feels exactly like the last one did in week two.

The partners weren’t wrong. The talent was real. The intentions were good. What was missing had nothing to do with either. It never does.

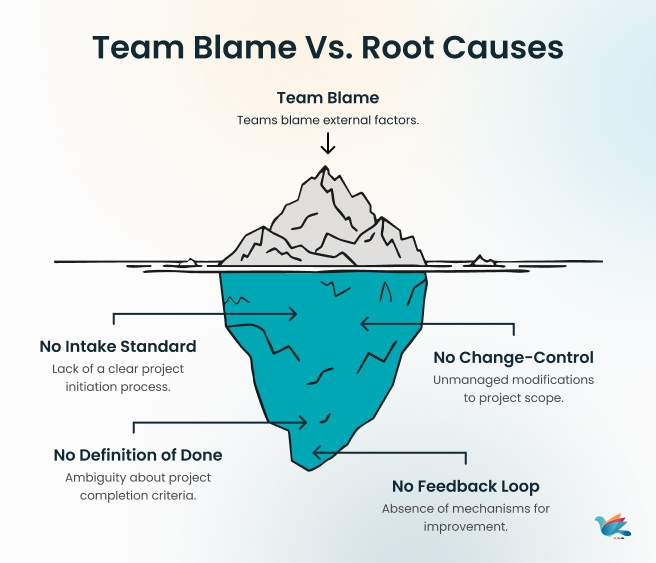

White-label delivery doesn’t fail because growing teams keep picking bad vendors. It fails because most hand a capable partner a structurally broken engagement — no intake standard, no shared definition of done, no change-control policy, no feedback loop that actually closes. Just capable people on both sides doing their best inside an arrangement that was never designed to hold at scale. And when the symptoms surface — missed timelines, rework disguised as revisions, coordination that multiplies instead of simplifies — the answer is always the same: find someone new.

Here’s the part nobody puts in the retrospective: the system was always the problem. Not the partner.

Then AI entered the room. Which, for a fragmented white-label outsourcing model, is a little like handing someone a faster car when the road itself is the issue. Velocity without structure isn’t progress. It’s just expensive chaos arriving sooner.

This guide is for growing teams, scaling companies, and organizations ready to do the less glamorous, more permanent thing: build an actual white-label delivery system — with named owners, defined handoffs, and an operating model that makes your white-label agency partner feel like a genuine extension of your team.

What follows is the blueprint. Framework, workflow, evaluation criteria, a white-label onboarding process that doesn’t rely on goodwill, and a 30-day rollout plan built on one principle: structure first, everything else after.

But before the blueprint, it’s worth understanding exactly how the system breaks — because until you see the failure pattern clearly, every new partner is just a new variable in the same broken equation.

What a White-Label Delivery System Actually Means (And What It Doesn't)

The term white-label agency services gets used loosely. Most teams interpret it as: find a partner, brief them, get work back, put your name on it. Which is accurate — but only in the way that “driving” accurately describes both a Sunday morning cruise and rush hour on a highway. Technically the same. Practically, worlds apart.

A white-label delivery system isn’t about who does the work. It’s about how the work moves — consistently, predictably, and without the client on the other end ever feeling the friction that happens behind the curtain. That’s the standard. And that standard requires more than a capable white-label development partner. It requires a shared operating model.

The Difference Between a White-Label Vendor and a White-Label System

Most engagements start as vendor relationships and stay there — not because the partner wasn’t capable, but because nobody designed the engagement to be anything more. The distinction matters more than most teams realize until something breaks.

| | White-label Vendor | White-label System |
| --- | --- | --- |
| Ownership | Whoever follows up the most | A named owner per outcome, across both sides |
| Intake | Brief over chat or email | Standardized template, defined criteria |
| Definition of done | Assumed and debated | Written, agreed, enforced upfront |
| Change management | “Sure, we can adjust that.” | Documented trade-off: scope, time, quality |
| Quality control | Checked at delivery | QA gates at entry and exit |
| Feedback loop | Post-project, if things went badly | Weekly cadence with retros that update the system |
| Scale behavior | Gets harder | Gets more predictable |

Why Most White-Label Arrangements Break at Scale

Here’s the uncomfortable pattern: most outsourced delivery models work fine at small volume. One project, two projects — the relationship runs on goodwill, familiarity, and the natural diligence of people who are new to working together and trying to make a good impression.

Then scale enters.

More clients. More concurrent projects. More stakeholders with opinions arriving at inconvenient moments. And what held the engagement together at low volume — informal communication, shared context, one person who just knew how things worked — starts to fracture quietly.

The first sign is rarely a missed deadline. It’s a meeting. Then another. Status updates that exist because nobody trusts the system of record. Escalations that happen because ownership was never formally defined, so decisions travel upward until someone with enough authority makes the call and everyone moves on — until the next one.

Rework follows. Not dramatic, visible rework. The kind that hides inside “minor fixes,” “final polish,” and “just one more round of amends.” The kind that doesn’t show up in the budget summary but absolutely shows up in the margin.

And at the center of all of it is a simple structural gap: the white-label agency services arrangement was never given an operating model. It was given a contact, a Slack channel, and an optimistic timeline.

At scale, optimism isn’t an operating model. A system is.

Why White-Label Delivery Fails Without an Execution OS

Most of what breaks in a white-label delivery engagement was never a vendor problem to begin with.

But that’s the easiest place to point. A missed deadline lands and the first question is — is this the right partner? Not — did we give them a clear enough brief? Not — did anyone define what done actually looks like?

So teams switch partners. And six months later, the same things break. Different name on the invoice. Same problems in the sprint.

The issue was never the partner. It was the absence of a system holding the engagement together. No clear ownership. No process that travels with the work. No feedback loop that catches problems before they become escalations.

Without that foundation, outsourcing execution failures aren’t a surprise. They’re a guarantee.

The 3 Hidden Failure Points in White-Label Delivery

Scaling agency delivery rarely breaks all at once. It leaks. Slowly. Across three gaps that most teams never formally name — which is exactly why they keep showing up, project after project.

- Rework Loops. The brief wasn’t clear at the start, so the work was corrected at the end. Repeatedly. It never gets logged as rework — it hides inside “minor amends” and “final polish.” Until someone’s margin quietly disappears.

- Coordination Tax. Three people updating three different places, nobody is sure which one is accurate. So a meeting gets called. Then another. At scale, the meetings become the system, and that’s the problem.

- Ownership Gaps. Work passes through multiple hands — your team, the delivery partner, sometimes the end client. When something falls through, the honest answer is usually: nobody was formally assigned to catch it. Not laziness. Just a structural blind spot nobody designed out of the process.

These three gaps don’t stay separate for long. Rework creates coordination overhead. Coordination overhead blurs ownership. Blurred ownership creates more rework. The cycle runs quietly — until a deadline makes it loud.

What "Hero-Dependent" Delivery Looks Like in a White-Label Context

Every team has that one person. The one who already knows the client’s preferences without being told. Who spots the problem in the brief before it becomes a problem in production. Who quietly picks up what fell through the gap and never makes a thing of it.

Things run well when they’re around. Everyone knows it. Nobody says it out loud.

That’s hero-dependent delivery. And it feels fine — until it isn’t. That person takes leave. The volume doubles. And suddenly the client experience that felt smooth starts to feel unreliable — not because anything changed, but because one person was carrying what a system should have been holding.

In a white-label development partner setup, this is even harder to spot — because that person is on the partner’s side. You don’t always know they exist. You just notice, eventually, when they’re not there. A real delivery system makes consistent execution the default. Regardless of who is in the room that week.

How AI Makes It Worse Without a System Underneath

AI works best when the inputs are clear. Feed it a well-written brief with defined acceptance criteria and it produces something useful. Feed it a vague brief with assumed context, and it produces something confident-looking that is still wrong — just faster and better formatted than before.

Now apply that to a white-label outsourcing setup where the brief travels across two organizations, gets interpreted twice, and the definition of done was never written down. AI doesn’t fix that gap. It accelerates it. The misalignment just surfaces later — usually in QA, usually at the worst possible time.

In a white-label quality control workflow without structure underneath, AI makes rework arrive with more confidence and fewer warnings.

The fix isn’t less AI. It’s a sequence. Build the system first — stable inputs, clear ownership, defined process. Then bring AI in. That’s when it actually does what the case studies promise.

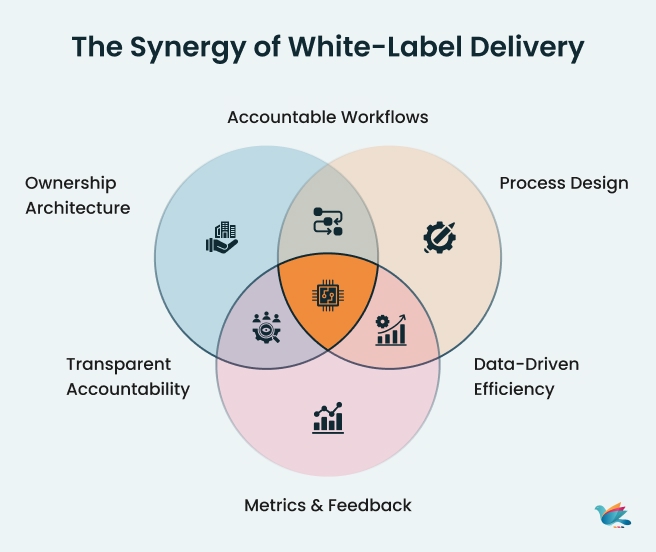

The 3-Layer Execution OS for White-Label Delivery

Every white-label project management conversation eventually lands on the same question: why does this keep breaking at scale?

The answer is almost always the same. Not the wrong partner. Not the wrong tools. A missing operating model.

An Execution OS is not a complicated thing. It’s three layers — ownership, process, and metrics — working together so delivery doesn’t depend on who’s having a good week. Each layer solves a specific problem. Together, they make the outsourced team workflow predictable, repeatable, and calm.

Here’s what each one looks like in practice.

Layer 1 — Ownership Architecture (Who Owns What Across Two Organizations)

Most white-label delivery engagements have plenty of people involved. What they rarely have is clarity on who is accountable for what — especially when work crosses from your team to the partner’s team and back again.

That gap is where decisions stall. Where escalations happen. Where the same question gets asked three times by three different people because nobody was formally assigned to answer it.

Ownership architecture doesn’t need to be complex. It needs to answer three things clearly:

- Who owns each outcome — not each task, but the result the task is meant to produce. One name. Not a team. Not “both sides.” One person who is accountable if it doesn’t happen.

- Who can make decisions, and how fast. In a cross-organization setup, decision latency is a real delivery cost. If every call needs four people in a room, the process will always move more slowly than the timeline.

- How escalations travel — when something breaks or changes, where does it go, who resolves it, and by when. Defined upfront. Not figured out in the moment.

When ownership is clear across both organizations, the coordination tax described earlier drops almost immediately. Not because people work harder, but because the structure stops creating confusion.

Layer 2 — Process Design (Intake → Sprint → QA → Delivery)

If Layer 1 answers who, Layer 2 answers how. This is where most white-label outsourcing arrangements have the biggest gap — and where the most rework originates.

Four things make it hold at scale:

Intake standard. One template. Defined acceptance criteria. What done looks like, written down before anyone starts.

Dependency mapping. Every upstream dependency named before the sprint begins. Owner. Due date. Not ready means it doesn’t enter. Simple rule, significant impact.

White-label QA standard. Quality gates built into the workflow, not bolted on at the end. Work moves forward when it meets the criteria. Not before.

Change control. Late change? Formal request. Documented trade-off — scope, timeline, or quality. One moves. The stakeholder decides. Nothing on assumption.

Layer 3 — Metrics & Feedback Loops (DORA + White-Label SLAs)

A system without a feedback loop isn’t a system. It’s a process that runs until something breaks — and then gets fixed manually, without updating what caused it.

This is the layer most white-label project management setups skip. Because measuring delivery health feels like overhead — until it would have caught the problem you’re currently firefighting.

Two things make it work.

SLA for white-label delivery. Every handoff has a defined turnaround time — brief to intake, intake to sprint, sprint to QA, QA to release. When SLAs are set, delays surface early. Teams stop chasing and start managing exceptions instead.
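As a rough illustration, handoff SLAs like these can be checked mechanically. The sketch below is only one possible shape: the stage names mirror the handoffs listed above, but the turnaround hours and the record fields (`stage`, `started`, `finished`) are assumptions made up for the example, not recommended targets.

```python
from datetime import datetime, timedelta

# Hypothetical SLA targets per handoff, in hours (illustrative values only).
SLA_HOURS = {
    "brief_to_intake": 24,
    "intake_to_sprint": 48,
    "sprint_to_qa": 24,
    "qa_to_release": 24,
}


def sla_breaches(handoffs, now):
    """Return the stages whose elapsed time exceeds the SLA target.

    Each handoff is a dict with 'stage', 'started', and an optional
    'finished'. A handoff that is still open is measured against `now`.
    """
    breaches = []
    for handoff in handoffs:
        end = handoff.get("finished") or now
        elapsed = end - handoff["started"]
        if elapsed > timedelta(hours=SLA_HOURS[handoff["stage"]]):
            breaches.append(handoff["stage"])
    return breaches


now = datetime(2024, 1, 11, 12, 0)
handoffs = [
    # Closed after 8 hours: within the 24-hour target.
    {"stage": "brief_to_intake",
     "started": datetime(2024, 1, 8, 9, 0),
     "finished": datetime(2024, 1, 8, 17, 0)},
    # Still open after 67 hours: past the 48-hour target.
    {"stage": "intake_to_sprint",
     "started": datetime(2024, 1, 8, 17, 0)},
]
print(sla_breaches(handoffs, now))  # ['intake_to_sprint']
```

Run on a schedule, a check like this surfaces the exception list, which is the part a team would actually manage.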

DORA metrics for agencies. Four numbers that show delivery health over time — not opinions, not feelings:

- Lead time — brief to delivery

- Delivery frequency — how consistently work ships on schedule

- Change failure rate — how often delivered work comes back for correction

- Time to recover — how quickly the team resolves a delivery failure
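To make the four numbers concrete, here is a minimal sketch that derives them from a list of delivery records. The record fields (`briefed`, `delivered`, `due`, `came_back`, `days_to_fix`) and the sample data are hypothetical, and using the median is a choice made for this example rather than something the DORA definitions prescribe.

```python
from datetime import date
from statistics import median

# Hypothetical delivery records; field names and values are illustrative.
deliveries = [
    {"briefed": date(2024, 3, 1), "delivered": date(2024, 3, 8),
     "due": date(2024, 3, 8), "came_back": False, "days_to_fix": None},
    {"briefed": date(2024, 3, 4), "delivered": date(2024, 3, 15),
     "due": date(2024, 3, 13), "came_back": True, "days_to_fix": 2},
    {"briefed": date(2024, 3, 11), "delivered": date(2024, 3, 20),
     "due": date(2024, 3, 20), "came_back": False, "days_to_fix": None},
    {"briefed": date(2024, 3, 18), "delivered": date(2024, 3, 25),
     "due": date(2024, 3, 26), "came_back": True, "days_to_fix": 4},
]


def delivery_health(records):
    """Summarize the four delivery-health numbers for a set of records."""
    lead_times = [(r["delivered"] - r["briefed"]).days for r in records]
    on_time = sum(r["delivered"] <= r["due"] for r in records)
    failures = [r for r in records if r["came_back"]]
    return {
        # Lead time: brief to delivery.
        "median_lead_time_days": median(lead_times),
        # Delivery frequency, read here as the share shipped on schedule.
        "on_schedule_rate": on_time / len(records),
        # Change failure rate: share of deliveries that came back.
        "change_failure_rate": len(failures) / len(records),
        # Time to recover: how long a returned delivery took to fix.
        "median_days_to_recover":
            median(r["days_to_fix"] for r in failures) if failures else 0,
    }


health = delivery_health(deliveries)
print(health)
```

With the sample data above, the median lead time is 8 days, 75% of work shipped on schedule, half of it came back, and returned work took a median of 3 days to fix.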

The weekly delivery review is where both come together. Not a status meeting — a structured 30 minutes on blockers, rework causes, and SLA adherence. It ends with one system update. A process change. A new rule. Something that makes next week structurally better than this one. That’s the loop. And that’s what separates a delivery system from a delivery ritual.

How to Design the Intake-to-Delivery Workflow for White-Label Projects

This is where the Execution OS moves from concept to practice. A white-label workflow management system doesn’t need to be complicated — it needs to be consistent. The same steps, the same standards, every project. That’s what makes client delivery workflow predictable at scale.

Six steps. In order. No skipping.

Step 1 — Standardize the Brief & Intake Template

| What | Why It Matters |
| --- | --- |
| One intake template for every project | Eliminates interpretation gaps before work begins |
| Project objective + success criteria included | Everyone starts with the same definition of success |
| Stakeholder sign-off is required before the sprint | No work starts on an unapproved brief |
| Template lives in one shared location | Both teams work from the same version, not three different places |

💡 If your briefs arrive over email, Slack, and verbal conversations — your intake isn’t standardized. Pick one format. Enforce it.
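One way to enforce a single format is to treat the brief as structured data and reject it while fields are missing. This is a minimal sketch, assuming a hypothetical `IntakeBrief` shape; the field names are illustrative and would be replaced by whatever your own template contains.

```python
from dataclasses import dataclass, field


@dataclass
class IntakeBrief:
    """Hypothetical intake template; fields are assumptions for this sketch."""
    project: str
    objective: str = ""
    success_criteria: list = field(default_factory=list)
    acceptance_criteria: list = field(default_factory=list)
    signed_off_by: str = ""


def intake_gaps(brief):
    """Return the template fields that are still empty."""
    gaps = []
    if not brief.objective.strip():
        gaps.append("objective")
    if not brief.success_criteria:
        gaps.append("success_criteria")
    if not brief.acceptance_criteria:
        gaps.append("acceptance_criteria")
    if not brief.signed_off_by.strip():
        gaps.append("stakeholder_sign_off")
    return gaps


brief = IntakeBrief(
    project="Checkout redesign",
    objective="Reduce cart abandonment",
    success_criteria=["Checkout completes in three steps"],
)
print(intake_gaps(brief))  # ['acceptance_criteria', 'stakeholder_sign_off']
```

A brief with a non-empty gap list simply does not enter the sprint, which keeps the rule out of individual judgment.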

Step 2 — Define "Done" Before Work Begins

| What | Why It Matters |
| --- | --- |
| Written acceptance criteria per deliverable | Removes “that’s not what I meant” at review stage |
| Visual and functional standards documented | QA has a clear benchmark to measure against |
| Client approval conditions defined upfront | Prevents scope creep disguised as feedback |
| Definition of done signed off before sprint starts | No assumptions travel into execution |

💡 Most rework doesn’t start in delivery. It starts in the brief. Define done early — pay for it once.

Step 3 — Map Dependencies With Named Owners and Due Dates

| What | Why It Matters |
| --- | --- |
| Every dependency listed before the sprint begins | No mid-sprint surprises from missing inputs |
| Named owner assigned to each dependency | One person is accountable for each input |
| Due date set for every upstream input | Delays surface early, not at the deadline |
| Unresolved dependencies block sprint entry | Work only starts when it can actually finish |

💡 A sprint that starts without dependency clarity isn’t a sprint. It’s optimism with a deadline.
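The entry rule in this step can be expressed as a small gate. The sketch below assumes a hypothetical `Dependency` record and uses role labels instead of real names; it illustrates the rule, not a prescribed tool.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Dependency:
    """Hypothetical upstream input; fields are assumptions for this sketch."""
    name: str
    owner: Optional[str] = None
    due: Optional[date] = None
    ready: bool = False


def sprint_entry_blockers(dependencies):
    """List every reason the sprint cannot start yet."""
    blockers = []
    for dep in dependencies:
        if not dep.owner:
            blockers.append(f"{dep.name}: no named owner")
        if dep.due is None:
            blockers.append(f"{dep.name}: no due date")
        if not dep.ready:
            blockers.append(f"{dep.name}: not ready")
    return blockers


dependencies = [
    Dependency("Final designs", owner="agency design lead",
               due=date(2024, 5, 2), ready=True),
    Dependency("Product copy", due=date(2024, 5, 3)),
    Dependency("API credentials", owner="partner tech lead", ready=True),
]
for blocker in sprint_entry_blockers(dependencies):
    print(blocker)
# Product copy: no named owner
# Product copy: not ready
# API credentials: no due date
```

An empty list means the sprint can start. Anything else goes back to the named owner before work begins.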

Step 4 — Install Change-Control Rules (The Late-Change Policy)

| What | Why It Matters |
| --- | --- |
| Any change after sprint planning becomes a formal request | Stops casual scope additions mid-execution |
| Trade-off documented: scope, time, or quality | Stakeholder owns the consequence, not the team |
| Urgent threshold defined upfront | Not everything is urgent. The rule decides, not the mood. |
| Change log maintained across both teams | Full visibility on what changed, when, and why |
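A late-change policy can be sketched as a record that is only logged once the trade-off and the stakeholder decision are both present. `ChangeRequest`, `TradeOff`, and `log_change` are hypothetical names invented for this example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class TradeOff(Enum):
    SCOPE = "scope"
    TIMELINE = "timeline"
    QUALITY = "quality"


@dataclass
class ChangeRequest:
    """Hypothetical late-change record; fields are assumptions."""
    summary: str
    requested_by: str
    trade_off: Optional[TradeOff] = None
    approved_by: Optional[str] = None


# Shared log visible to both teams (an in-memory list in this sketch).
change_log = []


def log_change(request):
    """Accept a change only when the trade-off is documented and decided."""
    if request.trade_off is None:
        raise ValueError("change request needs a documented trade-off")
    if not request.approved_by:
        raise ValueError("change request needs a stakeholder decision")
    change_log.append(request)
    return request


log_change(ChangeRequest(
    summary="Add guest checkout",
    requested_by="client stakeholder",
    trade_off=TradeOff.TIMELINE,
    approved_by="client stakeholder",
))

try:
    log_change(ChangeRequest(summary="Quick tweak to the header",
                             requested_by="client stakeholder"))
except ValueError as err:
    print(f"Rejected: {err}")
```

The casual request is rejected until someone names what moves, which is the whole point of the policy.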


Step 5 — Set QA Entry & Exit Criteria

| What | Why It Matters |
| --- | --- |
| QA entry criteria defined per deliverable type | Work only enters QA when it’s actually ready |
| Exit criteria set before testing begins | Pass/fail is objective, not a judgment call |
| Outsourced QA process documented and shared | Partner team tests against the same standard |
| Defect severity levels are classified upfront | Prioritization is consistent across both teams |

💡 QA that runs without entry criteria isn’t quality control. It’s hoping for the best with extra steps.
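Entry and exit criteria become objective once they are written as checks. The following sketch assumes three hypothetical entry checks and a four-level severity scale; both are placeholders for whatever your two teams agree on.

```python
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical defect severity scale, agreed before testing starts."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


# Illustrative entry checks; the real list is set per deliverable type.
ENTRY_CHECKS = ("acceptance_criteria_linked", "self_review_done", "build_passing")


def missing_entry_checks(deliverable):
    """Return the entry checks a deliverable has not met yet."""
    return [check for check in ENTRY_CHECKS if not deliverable.get(check)]


def passes_exit(open_defects, max_open_severity=Severity.LOW):
    """Exit passes when no open defect is above the agreed severity."""
    return all(severity <= max_open_severity for severity in open_defects)


deliverable = {
    "acceptance_criteria_linked": True,
    "self_review_done": True,
    "build_passing": False,
}
print(missing_entry_checks(deliverable))          # ['build_passing']
print(passes_exit([Severity.LOW, Severity.HIGH]))  # False
print(passes_exit([Severity.LOW]))                 # True
```

Because the threshold is a parameter, both teams test against the same standard and can tighten it per deliverable type.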

Step 6 — Run a Weekly Delivery Review With Your White-Label Partner

| What | Why It Matters |
| --- | --- |
| 30-minute structured review, same time every week | Replaces ad-hoc chasing with a predictable cadence |
| Agenda: blockers, rework causes, SLA adherence | Covers what matters, nothing else |
| Ends with one system update: process, template, or rule | The review improves the system, not just the week |
| Both teams present: your side and partner side | Shared visibility closes the cross-org gap |

💡 A weekly review that produces no system update is just a status meeting. The update is the point.

The temptation is to skip steps. But every step you skip here shows up later, as a missed deadline, a rework cycle, or a client conversation you didn’t want to have.

Where to Embed AI in a White-Label Delivery System (And Where Not To)

AI works. That’s not the debate. The debate is when — and in a white-label delivery system, sequence matters more than speed.

Drop AI into a structured system and it accelerates outcomes. Drop it into a fragmented one and it accelerates confusion. The difference isn’t the tool. It’s what exists underneath it.

Once the Execution OS is in place — ownership clear, process defined, feedback loop running — there are exactly two places where AI in project delivery produces reliable, high-ROI results without adding noise.

High-ROI AI Use Cases in White-Label Delivery

Both assume the Execution OS is already running:

- Ambiguity detection pre-sprint — AI reviews the intake brief and flags missing acceptance criteria, unclear edge cases, and unstated assumptions before they become QA defects. Catching ambiguity before work starts costs nothing. Catching it after delivery costs a sprint.

- Notes → User stories + acceptance criteria — AI converts raw planning meeting notes into structured user stories with defined acceptance criteria. The owner reviews and approves before the sprint start. AI handles the formatting, humans handle the judgment.

Both use cases work for the same reason: stable inputs, defined review criteria, and a human in the loop. That’s the condition under which AI-assisted QA reduces friction instead of adding it.

AI Use Cases That Create More Chaos Than Clarity

The temptation with AI white-label automation is to apply it wherever work feels slow. But slow is often a signal — of unclear inputs, missing ownership, or undefined standards. AI applied there doesn’t fix the signal. It muffles it.

- AI-generated briefs without human review — confident-sounding inputs that are still structurally vague

- Automated QA without exit criteria — pass/fail becomes inconsistent, AI tests against assumptions, not standards

- AI-managed sprint planning — tasks get assigned, accountability doesn’t travel with them

- Auto-generated status updates — creates appearance of visibility without the substance of it

The pattern is consistent. AI in project delivery earns its place after the system is stable — not as a shortcut to getting there.

How to Evaluate If Your White-Label Partner Has a Real Delivery System

Most white-label partner evaluation processes focus on the wrong things. Portfolio. Pricing. Responsiveness on the intro call. These matter — but they tell you what the partner has done, not how they operate.

A capable partner and a system-driven partner are not the same thing. One delivers good work when conditions are favorable. The other delivers consistently — regardless of project complexity, volume, or how late the stakeholder sends the brief.

Here’s how to tell the difference before you sign anything.

7 Questions to Ask a White-Label Partner Before Signing

These aren’t trick questions. They’re structural ones. A partner with a real delivery system will answer them without hesitation — because the answers are already documented.

- How does work enter your system? — Is there a standardized intake process, or does every project start differently?

- How do you define “done”? — Is there a written definition of done per deliverable type, or is it decided project by project?

- How are dependencies tracked? — What happens when an upstream input — design, content, approvals — isn’t ready when the sprint starts?

- What is your change-control policy? — How do you handle scope changes that arrive after planning?

- How is QA structured? — Are there entry and exit criteria, or does QA happen at the end when everyone is already waiting?

- What does your weekly delivery review look like? — Is there a structured cadence, or is communication reactive?

- How does your system improve over time? — What does a retro produce, and how do findings update your process?

Red Flags vs Green Flags — Vendor or System Partner?

A single conversation won’t tell you everything. But the signals are usually visible early — if you know what to look for.

| | 🔴 Red Flag: Vendor | 🟢 Green Flag: System Partner |
| --- | --- | --- |
| Intake | “Send us the brief and we’ll get started” | Structured intake template with defined criteria |
| Definition of Done | Decided per project, per person | Written, agreed, enforced before sprint starts |
| Dependencies | Managed through chat messages | Mapped with named owners and due dates |
| Change Requests | “Sure, we can add that” | Formal change request with documented trade-off |
| QA | Reviewed at delivery | Entry and exit gates built into the workflow |
| Communication | Reactive: updates when chased | Proactive: structured weekly delivery review |
| Improvement | Retros happen after things go wrong | Retros happen weekly and update the process |
| Scaling | Gets harder as volume grows | Gets more predictable as volume grows |

A vendor relationship requires constant management to produce consistent output. The best white-label agency partner is one where the system does that work — so your team isn’t the glue holding everything together every sprint.

What Most Teams Get Wrong in the First 30 Days of a White-Label Engagement

The first 30 days set the tone for everything that follows. The mistakes aren’t dramatic — they’re quiet, consistent, and expensive enough to name before they happen to you.

- Week 1 — The Relationship Starts. The System Doesn’t.

Good kickoff. Everyone aligned. No intake standard, no ownership clarity, no definition of done. Work starts before the system does — and the coordination tax starts with it.

The mistake: Starting work before starting the system.

The fix: Define how work enters, who owns outcomes, and what done looks like — before the first brief goes out.

- Week 2 — Speed Feels Good. Clarity Isn’t There Yet.

First rework cycle appears. The instinct is to fix and move on. That’s exactly what keeps the cycle running.

The mistake: Treating rework as friction instead of a system signal.

The fix: Retro the first rework instance. Find where the gap entered. Fix it before the next sprint starts.

- Week 3 — A Stakeholder Changes Something. Everything Slows Down.

A small change arrives over Slack. The team absorbs it quietly. Late changes become the norm — and the margin quietly disappears with them.

The mistake: Accommodating late changes informally to preserve the relationship.

The fix: Install change-control before week three — not in response to it.

- Week 4 — The Engagement Is Running. The System Still Isn’t.

No delivery review. No retro. No system update. The engagement runs on goodwill — until volume increases and the absence of structure becomes loud.

The mistake: Confusing a functioning relationship with a functioning system.

The fix: Run the first formal delivery review. 30 minutes. One system update. That’s when the engagement becomes system-dependent, not relationship-dependent.

Real Delivery Outcomes When the System Works

Frameworks are only convincing until someone asks: but does it actually work?

The answer isn’t a single case study. It’s a pattern — consistent enough across engagements that it stops feeling like a coincidence and starts feeling like cause and effect. Install the system. The delivery changes. Every time.

Here’s what that shift looks like in practice.

Before — What Chaotic White-Label Delivery Looks Like

It rarely looks chaotic from the outside. That’s what makes it expensive.

Timelines are mostly met — but only because someone worked late to make it happen. Briefs are mostly clear — but interpretation gaps surface in QA, not planning. Clients are mostly satisfied — but the margin required to keep them that way keeps shrinking.

Inside the engagement, it feels like permanent mild urgency. Work is always moving but never quite predictable. Every sprint has at least one thing that needed more time than it should have. Every release has at least one thing that came back.

Nobody’s failing. The system just isn’t working — and nobody’s named it yet.

After — What Calm, Scalable White-Label Delivery Looks Like

The shift isn’t dramatic. It’s structural — and it shows up in small, consistent signals before it shows up in the numbers.

Briefs stop coming back for clarification because the intake catches the gaps. Sprints stop blocking mid-cycle because dependencies were mapped before work started. Late changes stop quietly reshaping timelines because change-control makes the trade-off visible before anyone says yes.

And the weekly delivery review — the one that felt like overhead at first — starts producing something tangible. A process update. A template improvement. A rule that prevents the thing that broke last sprint from breaking again.

Volume increases. The noise doesn’t. That’s the signal that the system is working.

The Metric That Signals System Maturity — Rework Rate

Most delivery metrics measure output. Rework rate measures something more useful — whether the system is improving or just running.

Rework rate is simple: what percentage of delivered work comes back for correction? Track it sprint over sprint. A declining rework rate means the intake is getting cleaner, the definitions of done are holding, and the feedback loop is closing. A flat or rising rework rate means the system has a gap that hasn’t been named yet.

In a white-label delivery system context, rework rate matters even more because rework crosses organizational boundaries — it costs both teams time, not just one. A 20–30% reduction in rework, which is a realistic early outcome of installing an Execution OS, doesn’t just improve margins. It improves the relationship. Fewer corrections means fewer uncomfortable conversations. Fewer uncomfortable conversations mean the partnership compounds in the right direction.

That’s the metric worth watching. Not velocity. Not output volume. The rate at which delivered work needs to come back.
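The calculation itself is small enough to live in a spreadsheet or a few lines of code. Here is a minimal sketch with made-up sprint numbers; the figures are illustrative only and do not come from any real engagement.

```python
def rework_rate(delivered, returned):
    """Share of delivered items that came back for correction."""
    if delivered == 0:
        return 0.0
    return returned / delivered


# Hypothetical sprint data: (label, items delivered, items returned).
sprints = [
    ("Sprint 1", 20, 6),
    ("Sprint 2", 22, 5),
    ("Sprint 3", 25, 4),
]

rates = [rework_rate(delivered, returned) for _, delivered, returned in sprints]
for (label, _, _), rate in zip(sprints, rates):
    print(f"{label}: {rate:.0%}")

# Declining only if every sprint improves on the one before it.
declining = all(later < earlier for earlier, later in zip(rates, rates[1:]))
trend = "declining" if declining else "flat or rising"
print(f"Trend: {trend}")
```

In this sample the rate falls from 30% to roughly 23% to 16%, so the trend reads as declining. A flat or rising result is the prompt to look for the unnamed gap.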

Conclusion

Most teams don’t have a white-label problem. They have a clarity problem — and they’ve been solving it by changing the people instead of changing the structure.

The brief was always the gap. The ownership was always unclear. The feedback loop was always missing. And the next partner, however capable, walks into the same arrangement and eventually produces the same result.

The good news is that the structure is designable. Ownership is assignable. Processes are buildable. And once the system is in place, delivery stops being something you manage nervously and starts being something you trust quietly. That’s the shift this guide has been building toward. Not a better vendor. A better operating model.

ZealousWeb exists for organizations that have outgrown the vendor model but haven’t yet built the system to replace it.

We don’t show up with a team and a timesheet. We show up with an operating model — one that’s been designed around how delivery actually breaks, not how it looks in a kickoff deck. Execution systems that remove the heroics. Intelligence layers that amplify what’s already working. And the kind of operational clarity that turns a white-label relationship from a managed risk into a genuine growth lever.

If the delivery is still louder than it should be, we should talk.

Work with ZealousWeb

FAQs

Our projects are too varied for a rigid process. Won't a system slow us down?

A system doesn't add friction — it removes the friction already there. The clarifications, the mid-sprint blockers, the late changes. We don't standardize the work. We standardize how the work moves.

We don't have the bandwidth to install a delivery system right now.

That's usually when it's most needed. ZealousWeb designs and installs the operating model alongside your team — not instead of it. The lift is lighter than it looks.

How do we know you're actually system-driven and not just saying the right things?

Ask structural questions. How does work enter? How is it defined? What does a weekly review produce? At ZealousWeb, we don't just answer these — we show you the system behind them.

We're already using AI. Why isn't it solving our consistency problem?

Because AI amplifies what's underneath it. Vague inputs produce confident-looking work that's still wrong — just faster. We install the foundation first. Then AI compounds it.

We've already switched white-label partners twice. Why would a third time be any different?

It won't be — if the only thing changing is the partner. At ZealousWeb, we design the operating model before any work begins. Same partner, completely different structure. That's what changes the outcome.